MRHaD: Mixed Reality-based Hand-Drawn Map Editing Interface for Mobile Robot Navigation

The University of Osaka / Kobe University

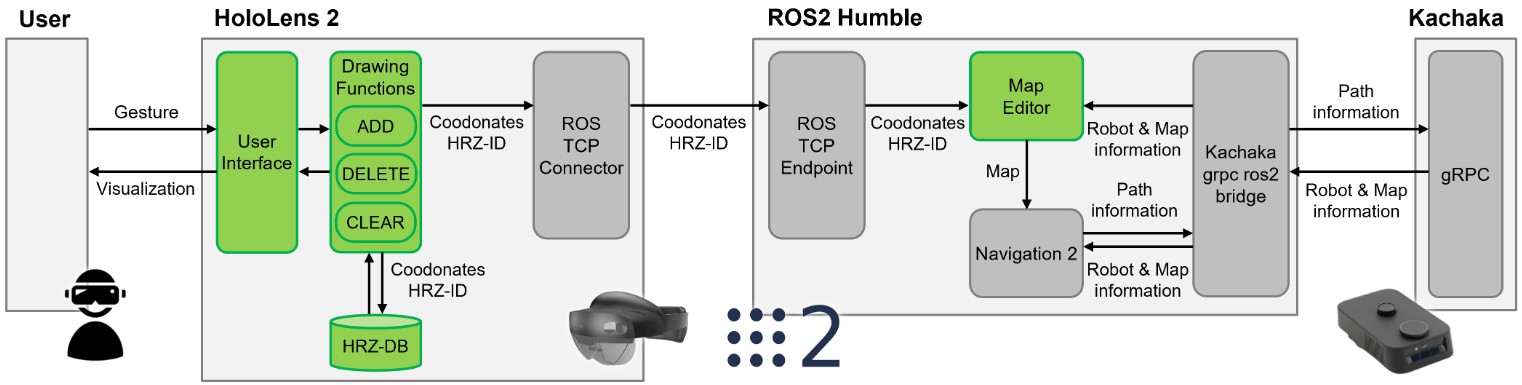

System Design

The system provides three core functions accessible via an on-screen menu:

- ADD: Draw a new HRZ.

- DELETE: Remove an existing HRZ.

- CLEAR: Remove all HRZ from the map.

Data Management:

HRZ data (ID and vertex coordinates) is stored in a JSON file within the Unity project. This file is:

- Loaded at system startup.

- Updated when HRZ are added, deleted, or cleared.

- Overwritten to reflect the latest state.
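The load/update/overwrite cycle described above can be sketched as follows. This is a minimal illustration in Python, not the project's Unity code: the class name `HRZStore`, the file layout (`{"zones": [{"id": ..., "vertices": [...]}]}`), and the ID numbering scheme are all assumptions made for the example.

```python
import json
from pathlib import Path


class HRZStore:
    """Hypothetical HRZ store: loads at startup, overwrites the file on every change."""

    def __init__(self, path):
        self.path = Path(path)
        self.zones = {}  # zone ID -> list of [x, y] vertices
        # Load existing zones at startup, if the file is already there.
        if self.path.exists():
            with self.path.open(encoding="utf-8") as f:
                for zone in json.load(f)["zones"]:
                    self.zones[zone["id"]] = zone["vertices"]

    def _save(self):
        # Overwrite the whole file so it always reflects the latest state.
        data = {"zones": [{"id": i, "vertices": v} for i, v in self.zones.items()]}
        with self.path.open("w", encoding="utf-8") as f:
            json.dump(data, f, indent=2)

    def add(self, vertices):
        """ADD: store a new zone and return its ID (assumed: max existing ID + 1)."""
        zone_id = max(self.zones, default=0) + 1
        self.zones[zone_id] = [list(p) for p in vertices]
        self._save()
        return zone_id

    def delete(self, zone_id):
        """DELETE: remove one zone; unknown IDs are ignored."""
        self.zones.pop(zone_id, None)
        self._save()

    def clear(self):
        """CLEAR: remove every zone."""
        self.zones.clear()
        self._save()
```

Rewriting the whole file on each change keeps the on-disk state trivially consistent with memory, which is reasonable when the number of zones is small.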

Experiments

2D Interface (Baseline) and MR Interface (MRHaD)

2D System

MRHaD

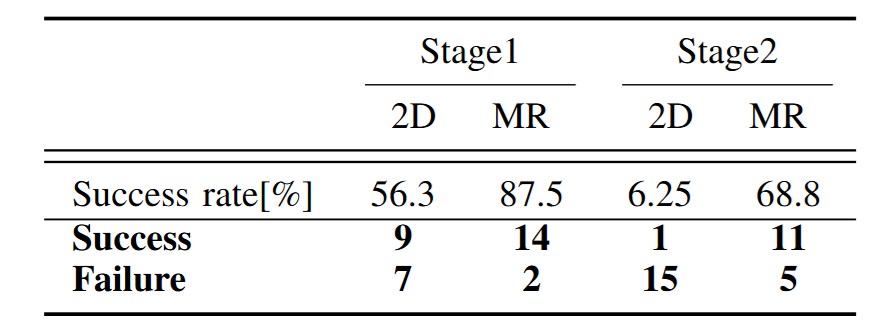

To evaluate the effectiveness of our system, we conducted an experiment comparing the proposed MR interface with a 2D interface operated with a computer display and mouse. The 2D interface serves as the baseline because it is the more commonly used setup in daily life.

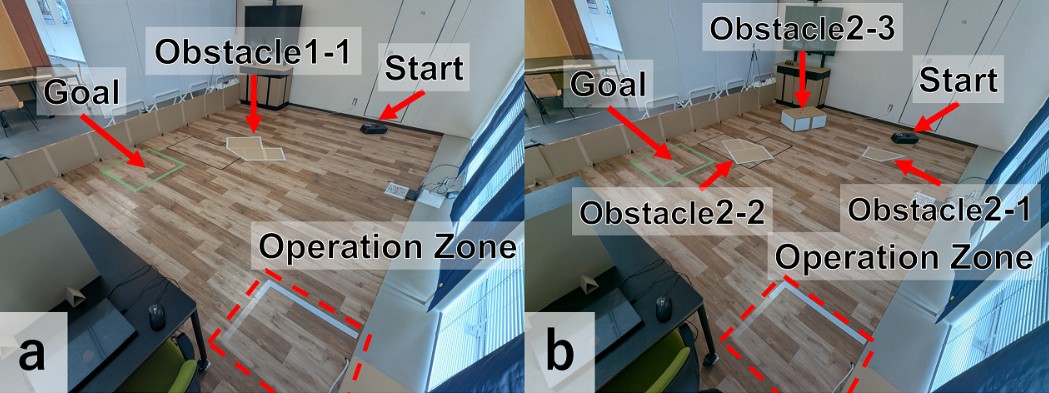

Environments

Task Flow:

- Participants face a wall to prevent prior exposure to obstacle locations.

- Upon a start signal, they observe the obstacles and draw HRZ using the assigned interface.

- They press the “Task Complete” button to trigger the robot’s navigation.

- The robot navigates to a predefined goal.

Results

Robot Navigation Success Rate

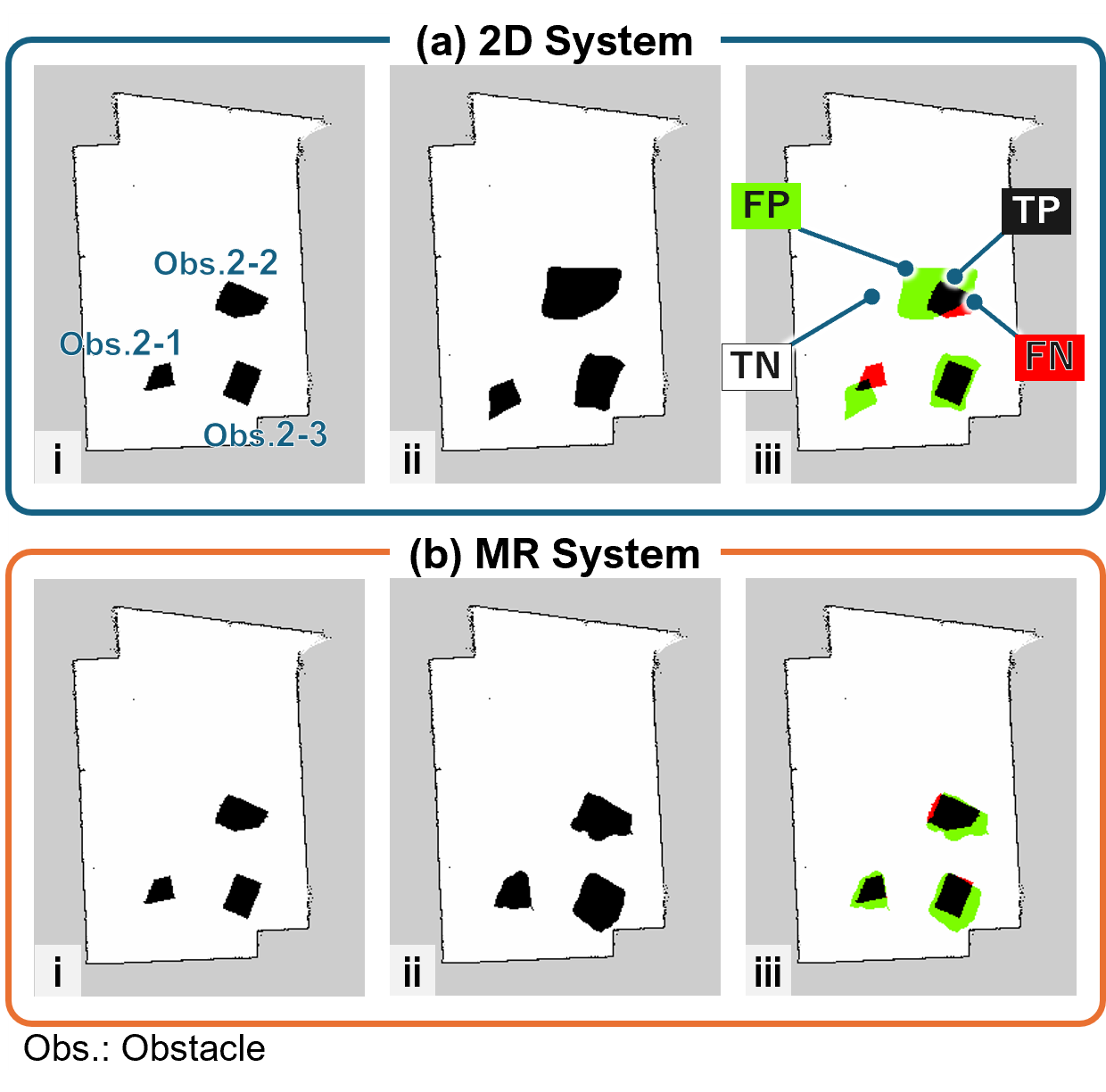

Map Reflection Accuracy

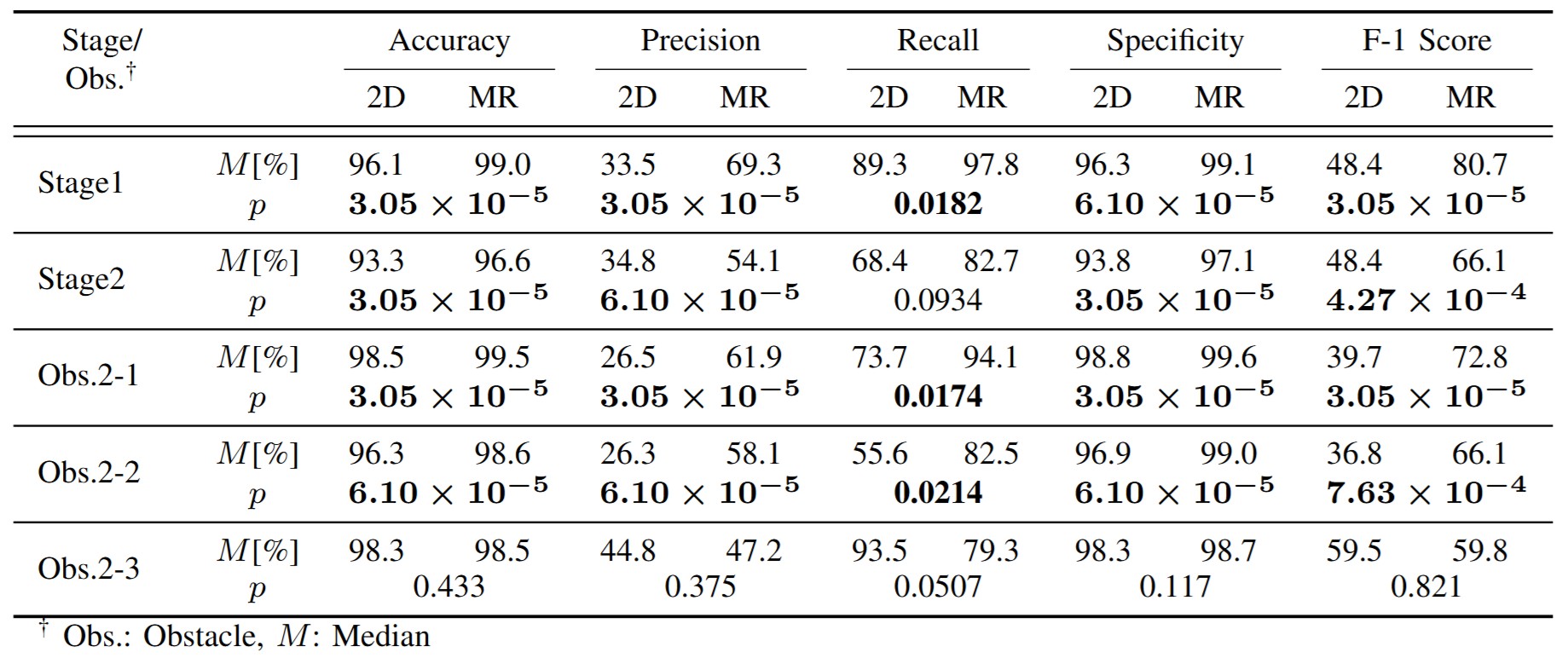

Map reflection accuracy was quantitatively assessed by comparing the SLAM-generated map (Ground Truth) with the participant-generated HRZ map (Drawn Map). Each pixel was classified into one of four categories:

- True Positive (TP): Cells classified as occupied in both the Ground Truth and the Drawn Map (excluding walls).

- False Positive (FP): Cells classified as free or unknown in the Ground Truth but classified as occupied in the Drawn Map.

- False Negative (FN): Cells classified as occupied in the Ground Truth but classified as free in the Drawn Map.

- True Negative (TN): Cells classified as free in both the Ground Truth and the Drawn Map.
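The four-way classification above can be sketched as a per-cell comparison of two grids. The cell encoding (`FREE`, `OCCUPIED`, `UNKNOWN`), the function name `classify_cells`, and the optional `wall_mask` are assumptions for illustration. The page says only that walls are excluded from TP, so skipping wall cells entirely is a simplification made here. Cell combinations not listed above (for example, unknown in the Ground Truth and free in the Drawn Map) are left uncounted.

```python
from collections import Counter

# Hypothetical cell encoding; the actual map format is not specified on the page.
FREE, OCCUPIED, UNKNOWN = 0, 1, -1


def classify_cells(ground_truth, drawn, wall_mask=None):
    """Count TP/FP/FN/TN over two equally sized 2D grids (lists of rows)."""
    counts = Counter(TP=0, FP=0, FN=0, TN=0)
    for r, (gt_row, dr_row) in enumerate(zip(ground_truth, drawn)):
        for c, (gt, dr) in enumerate(zip(gt_row, dr_row)):
            # Assumption: wall cells are excluded from the evaluation altogether.
            if wall_mask is not None and wall_mask[r][c]:
                continue
            if gt == OCCUPIED and dr == OCCUPIED:
                counts["TP"] += 1
            elif gt in (FREE, UNKNOWN) and dr == OCCUPIED:
                counts["FP"] += 1
            elif gt == OCCUPIED and dr == FREE:
                counts["FN"] += 1
            elif gt == FREE and dr == FREE:
                counts["TN"] += 1
            # Any other combination falls outside the four categories.
    return dict(counts)
```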

Based on these classifications, the following metrics were calculated: Accuracy, Precision, Recall, Specificity, and F1-Score. Each metric represents the following:

- Accuracy: The proportion of correctly classified regions in the Drawn Map compared to the Ground Truth.

- Precision: The proportion of the restricted zones in the Drawn Map that correctly correspond to restricted zones in the Ground Truth.

- Recall: The proportion of restricted zones in the Ground Truth that were correctly reflected in the Drawn Map.

- Specificity: The proportion of navigable areas in the Ground Truth that were correctly maintained as navigable areas in the Drawn Map.

- F1-Score: The harmonic mean of Precision and Recall.
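In terms of the TP, FP, FN, and TN pixel counts, these metrics are conventionally written as shown here. The page defines them only in words, so the formulas are the standard confusion-matrix forms, assumed to match what was computed:

```latex
\begin{align*}
\text{Accuracy}    &= \frac{TP + TN}{TP + FP + FN + TN} \\
\text{Precision}   &= \frac{TP}{TP + FP} \\
\text{Recall}      &= \frac{TP}{TP + FN} \\
\text{Specificity} &= \frac{TN}{TN + FP} \\
\text{F1}          &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}
\end{align*}
```

A low Recall means occupied cells were left unmarked (FN), which is why it maps most directly onto the safety concern.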

In autonomous mobile robot navigation, safety is the top priority, followed by efficiency. Therefore, Recall was given the highest priority in evaluation, followed by Precision and Specificity.

In Stage 1, Obstacle 2-1, and Obstacle 2-2, the MR system achieved superior scores across all metrics.

In terms of Recall, the MR system outperformed the 2D system with 97.8% compared to 89.3% in Stage 1, 94.1% compared to 73.7% in Obstacle 2-1, and 82.5% compared to 55.6% in Obstacle 2-2. These significant differences (p < 0.05) suggest that the MR system has fewer omissions in reflecting the Ground Truth of the impassable area. On the other hand, in Obstacle 2-3, the Recall of the 2D system was higher at 93.5% compared to the MR system’s 79.3%, which affected the overall Recall value for Stage 2 and resulted in no statistically significant difference. The height of Obstacle 2-3 and its distance from the participants likely made depth perception more challenging, resulting in positional errors during drawing with the MR system and insufficient coverage of the obstacle’s base.

Regarding Precision and Specificity, all obstacles except Obstacle 2-3 showed higher values for the MR system than for the 2D system, with statistically significant differences. These results indicate that the MR system has a lower rate of incorrectly marking passable areas as impassable, which means the MR system imposes fewer restrictions on the robot’s navigation range.

Furthermore, the MR system demonstrated higher median values with smaller variation among participants for Precision and Recall in Stage 1 and Obstacle 2-2, while the 2D system exhibited a broader range extending to lower values. A similar trend was observed in the F1-Score, which is the harmonic mean of Precision and Recall. This result is likely because Obstacle 1-1 and Obstacle 2-2 had fewer corners or landmarks that could serve as positional references compared to other obstacles, resulting in larger drawing position errors.

These findings demonstrate that the MR system reduces the risk of the robot entering restricted zones while generating a map that more accurately represents the real environment.

Map Editing Time and Efficiency

- Task Completion Time: Although there was no statistically significant difference in overall task completion time between the two systems, the 2D interface exhibited greater variability across participants.

- Drawing Attempts: The MR system generally required fewer drawing actions, often completing the HRZ in a single attempt, which indicates improved operational speed and efficiency.

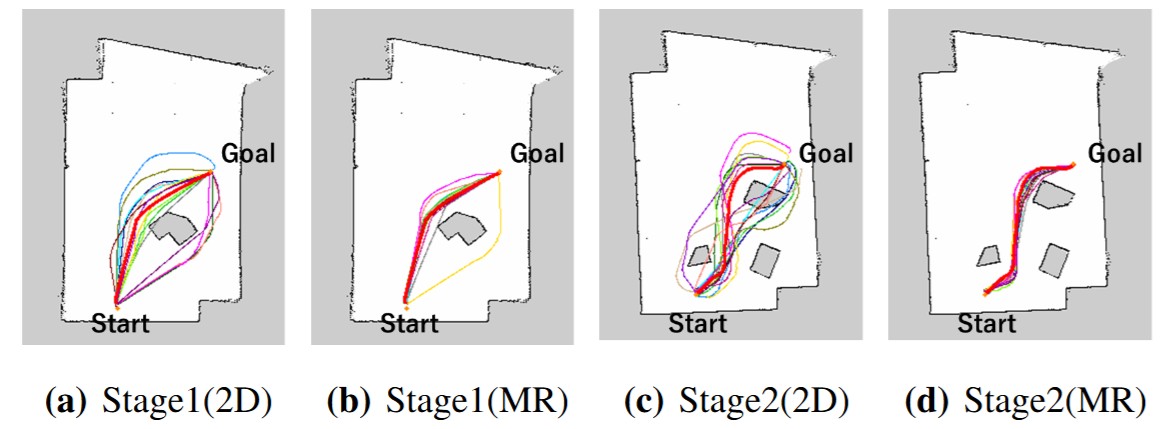

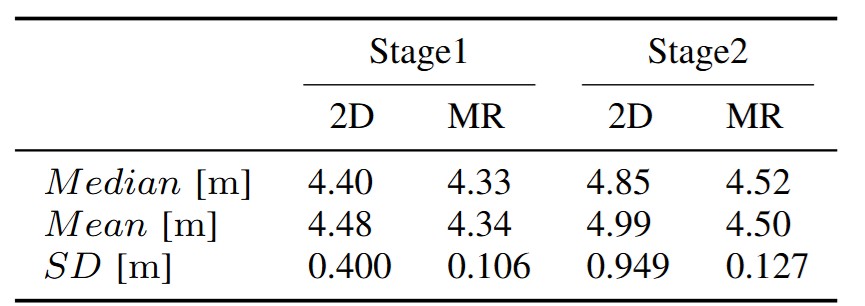

Global Path Efficiency

These results indicate that improved map editing accuracy via the MR interface leads to more efficient and reliable global path planning.

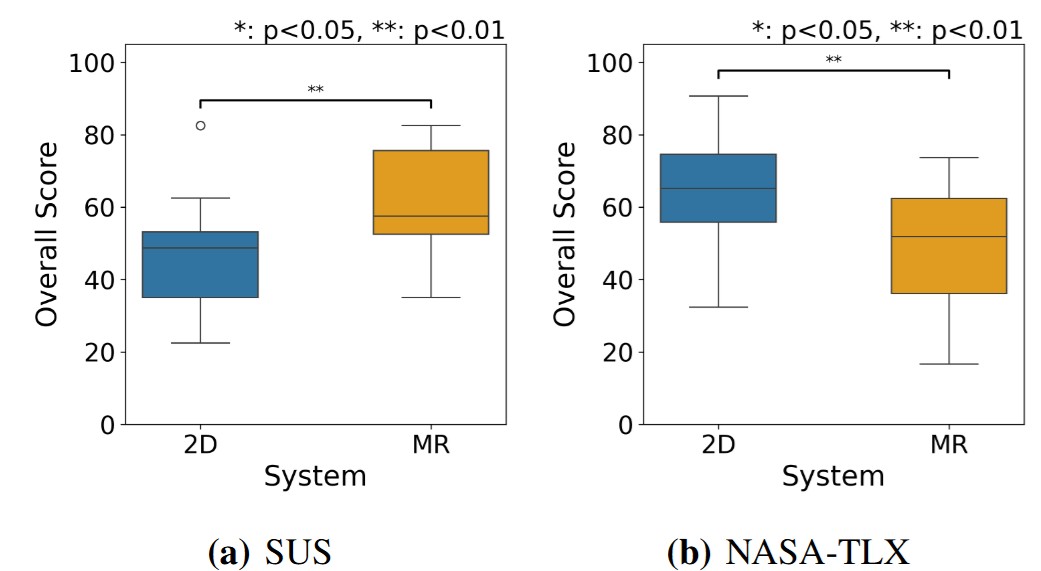

Cognitive Load

SUS and NASA-TLX

Overall, the MR system not only improved accuracy and efficiency in map editing but also reduced the mental effort required during the task.

Citation

@misc{taki2025mrhad,
  title={MRHaD: Mixed Reality-based Hand-Drawn Map Editing Interface for Mobile Robot Navigation},
  author={Takumi Taki and Masato Kobayashi and Eduardo Iglesius and Naoya Chiba and Shizuka Shirai and Yuki Uranishi},
  year={2025},
  eprint={2504.00580},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2504.00580},
}

Contact

Masato Kobayashi (Assistant Professor, The University of Osaka, Japan)

- X (Twitter)

- English: https://twitter.com/MeRTcookingEN

- Japanese: https://twitter.com/MeRTcooking

- LinkedIn: https://www.linkedin.com/in/kobayashi-masato-robot/