MRReP: Mixed Reality-based Hand-drawn Reference Path Editing Interface for Mobile Robot Navigation

The University of Osaka / Kobe University

Autonomous mobile robots operating in human-shared indoor environments often require paths that reflect human spatial intentions, such as avoiding interference with pedestrian flow or maintaining comfortable clearance. However, conventional path planners primarily optimize geometric costs and provide limited support for explicit route specification by human operators. This paper presents MRReP, a Mixed Reality-based interface that enables users to draw a Hand-drawn Reference Path (HRP) directly on the physical floor using hand gestures. The drawn HRP is integrated into the robot navigation stack through a custom Hand-drawn Reference Path Planner, which converts the user-specified point sequence into a global path for autonomous navigation. We evaluated MRReP in a within-subject experiment against a conventional 2D baseline interface. The results demonstrated that MRReP improved path specification accuracy and usability, reduced perceived workload, and enabled more stable path specification in the physical environment. These findings suggest that direct path specification in MR is an effective approach for incorporating human spatial intention into mobile robot navigation.

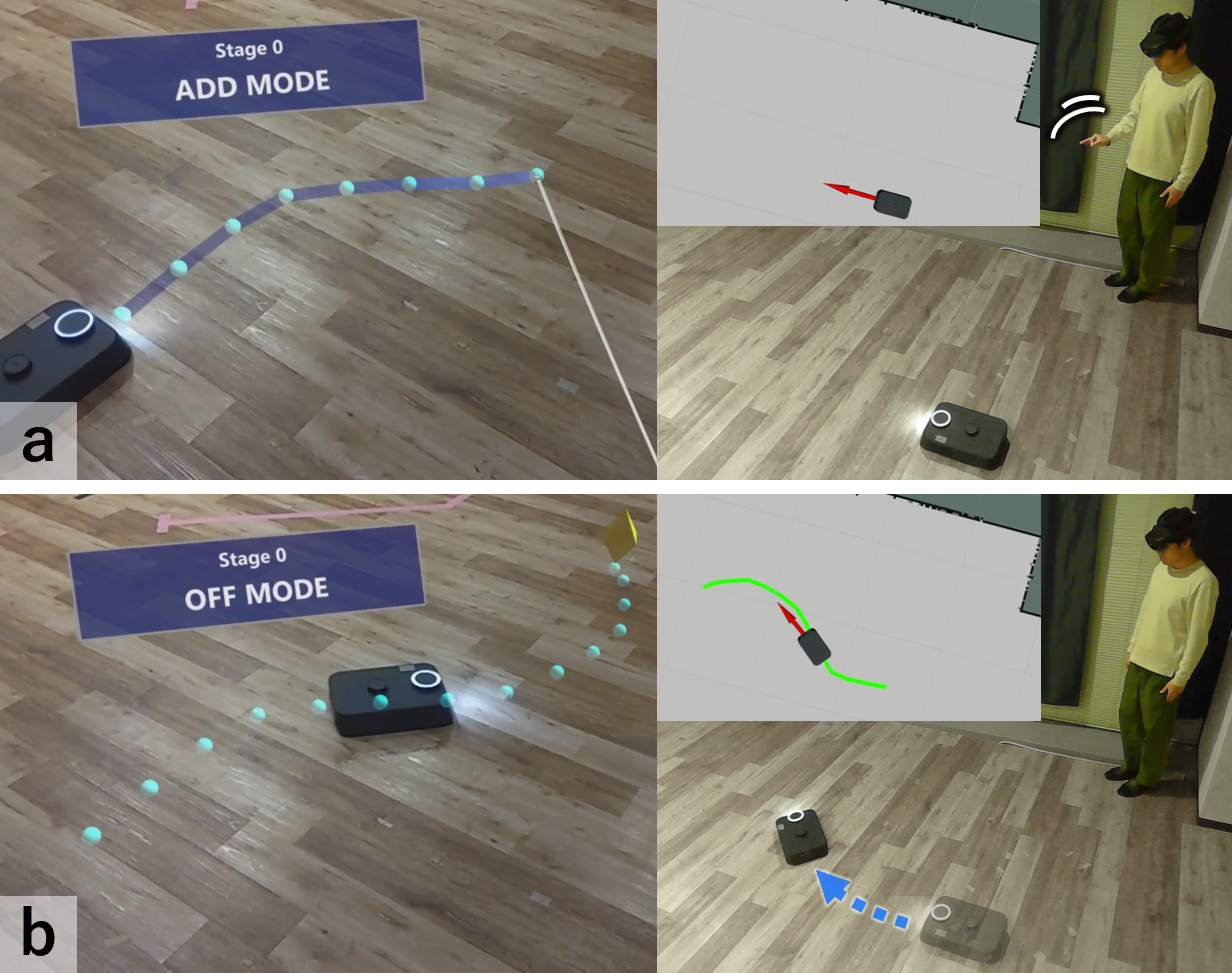

MRReP overview: (a) drawing an HRP on the floor in MR; (b) autonomous navigation along the specified path.

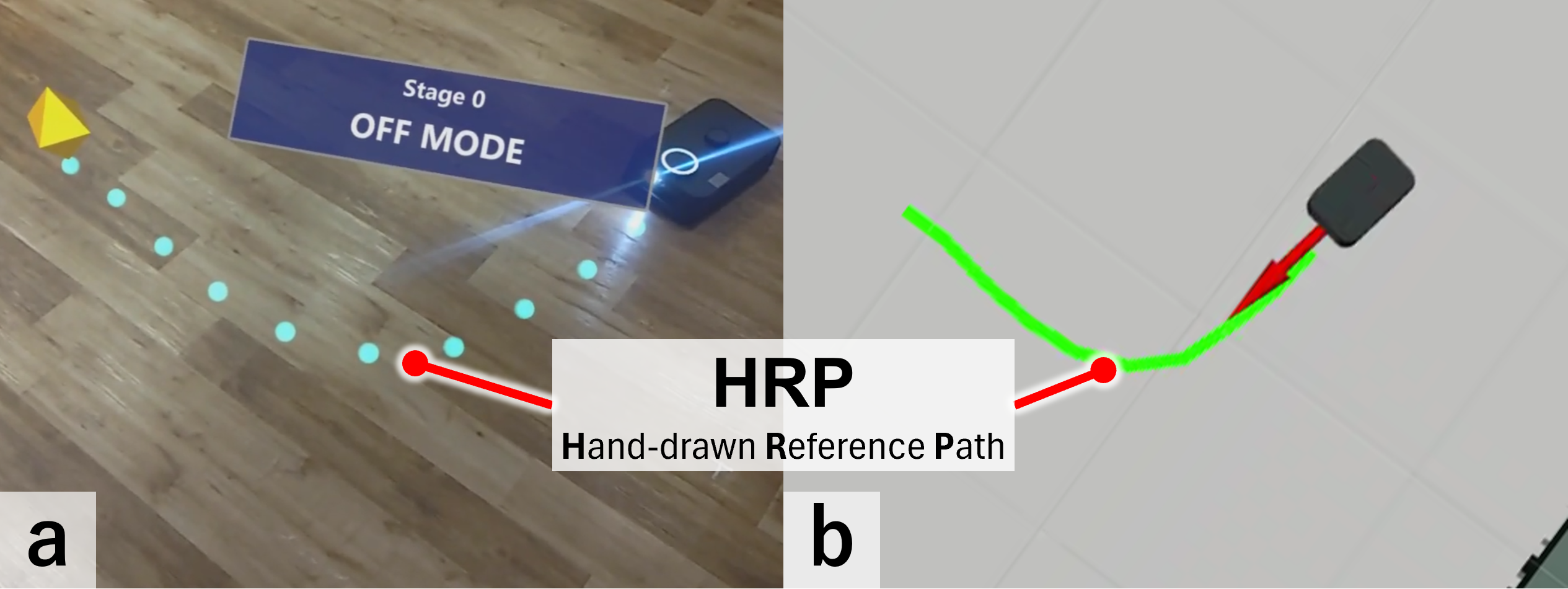

Example HRP in HoloLens 2 (yellow pin: goal) and the corresponding global path in ROS 2.

System Design

Users wearing a HoloLens 2 draw a Hand-drawn Reference Path (HRP) directly in the physical environment with hand gestures. The HRP is displayed in the MR view and stored with its ID and coordinate sequence so that the previous state can be restored after a reboot. When the HRP is sent to ROS 2, the stored coordinates are converted into a global path for autonomous navigation.
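The persistence step can be sketched as a small record store. This is a minimal illustration, not the MRReP code: the class names `HrpRecord` and `HrpStore`, the sequential integer IDs, and the JSON layout are all hypothetical. It only shows the idea of saving each HRP with an ID and a coordinate sequence and reloading that state on startup.

```python
import json
from dataclasses import asdict, dataclass, field
from pathlib import Path
from typing import List, Tuple

# Hypothetical layout: (x, z) floor coordinates relative to the QR-code origin.
Point = Tuple[float, float]


@dataclass
class HrpRecord:
    hrp_id: int
    points: List[Point]


@dataclass
class HrpStore:
    path: Path
    records: List[HrpRecord] = field(default_factory=list)

    def load(self) -> None:
        """Restore previously saved HRPs, e.g. after the headset reboots."""
        if not self.path.exists():
            self.records = []
            return
        raw = json.loads(self.path.read_text())
        # JSON stores tuples as lists, so convert them back on load.
        self.records = [
            HrpRecord(r["hrp_id"], [tuple(p) for p in r["points"]]) for r in raw
        ]

    def save(self) -> None:
        """Overwrite the file with the current in-memory state."""
        self.path.write_text(json.dumps([asdict(r) for r in self.records]))

    def add(self, points: List[Point]) -> int:
        """Store a new HRP under a fresh ID (hypothetical: max existing ID + 1)."""
        new_id = max((r.hrp_id for r in self.records), default=0) + 1
        self.records.append(HrpRecord(new_id, [tuple(p) for p in points]))
        self.save()
        return new_id

    def clear(self) -> None:
        """Remove every HRP, mirroring the CLEAR operation."""
        self.records = []
        self.save()
```

Rewriting the whole file on every change keeps the on-disk state identical to the scene, at the cost of rewriting small files often.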

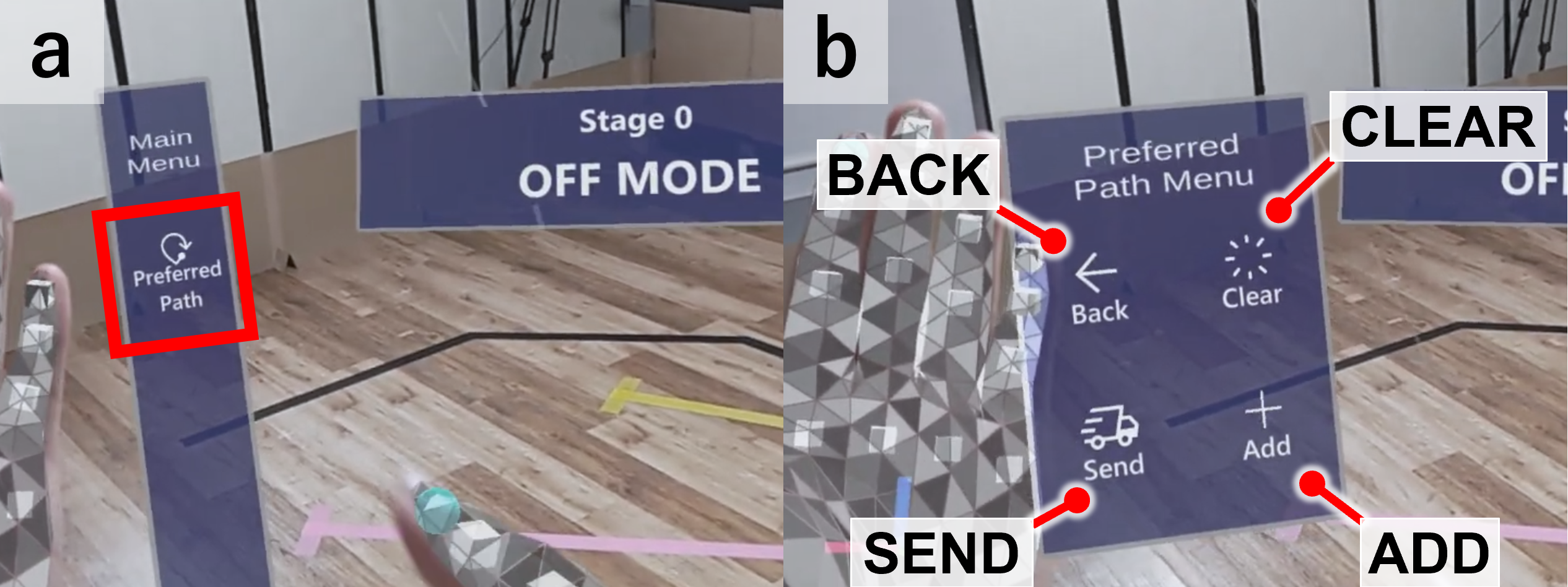

Menu panel: main menu and path operation modes.

The interface provides three HRP operation modes, ADD, CLEAR, and SEND, in addition to an OFF mode. Users switch between modes through the spatial menu panel.
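The mode switching can be pictured as a tiny state machine. This is a hedged sketch with hypothetical names (`Mode`, `MenuController`, `confirm`). The page states only that CLEAR and SEND ask for confirmation and that a confirmed CLEAR returns to OFF; what happens after a cancelled popup or a completed SEND is an assumption marked in the comments.

```python
from enum import Enum, auto


class Mode(Enum):
    OFF = auto()
    ADD = auto()
    CLEAR = auto()
    SEND = auto()


class MenuController:
    """Hypothetical mode controller driven by the spatial menu panel."""

    def __init__(self) -> None:
        self.mode = Mode.OFF

    def select(self, mode: Mode) -> None:
        # Selecting a menu entry switches directly to that mode.
        self.mode = mode

    def confirm(self, accepted: bool) -> str:
        """Resolve the confirmation popup shown in CLEAR or SEND mode."""
        if self.mode not in (Mode.CLEAR, Mode.SEND):
            return "no-op"
        if not accepted:
            return "cancelled"  # assumption: stay in the current mode
        if self.mode is Mode.CLEAR:
            self.mode = Mode.OFF  # confirmed CLEAR returns to OFF
            return "cleared"
        return "sent"  # assumption: the mode after SEND is not specified
```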

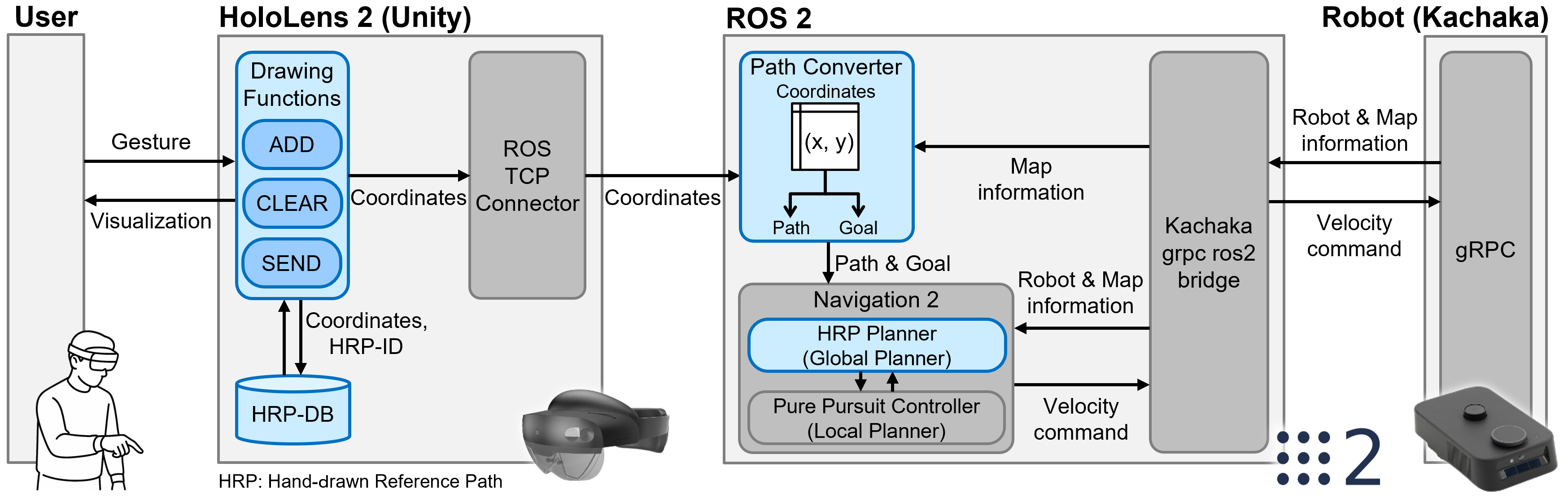

System architecture: HRP stored on HoloLens 2 is sent to ROS 2, converted to a global path and goal pose, and used in Navigation2.

ADD

In ADD mode, a floor cursor appears when the user extends the hand; maintaining a pinch gesture draws the HRP as a sequence of waypoints. Releasing the pinch completes the path and places a goal pin at the endpoint. The system assigns a unique ID and stores the coordinate sequence in the database.

ADD: floor cursor, pinch drawing, goal pin at endpoint.

CLEAR

CLEAR removes all HRPs. A confirmation popup prevents accidental deletion; on confirmation, scene objects and database records are cleared and the system returns to OFF mode.

CLEAR: confirmation and removal of all HRPs.

SEND

SEND transmits the stored HRP coordinates to ROS 2. After confirmation, the sequence is published as a ROS 2 message and path following is executed with the Nav2 stack.

SEND: confirmation, transmission to ROS 2, and robot path following.

System Implementation

Unity and ROS 2 communicate via ROS-TCP-Connector and ROS-TCP-Endpoint (a TCP server running on the ROS 2 side). ROS 2 communicates with the Kachaka robot through the gRPC-based kachaka-api.

Coordinate alignment between Unity and ROS 2 uses HoloLens 2 QR code detection (Vuforia): a physical QR code at the ROS 2 map origin is matched to a virtual reference in Unity.
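The alignment can be illustrated as two steps: express a Unity point relative to the detected QR pose, then swap axes from Unity's convention (x right, y up, z forward) to the ROS convention (x forward, y left, z up). This is a sketch under stated assumptions, not the MRReP implementation: the QR code is assumed to lie flat on the floor with only a yaw rotation about Unity's vertical axis, its forward axis is assumed to coincide with the map +x axis, and the function name `unity_to_map` is hypothetical. The axis swap matches the FLU convention used by ROS-TCP-Connector.

```python
import math
from typing import Tuple


def unity_to_map(p_unity: Tuple[float, float, float],
                 qr_pos: Tuple[float, float, float],
                 qr_yaw_deg: float) -> Tuple[float, float]:
    """Map a Unity world point to 2D ROS map coordinates via the QR origin."""
    # 1) Undo the QR translation on the floor plane (Unity x and z).
    dx = p_unity[0] - qr_pos[0]
    dz = p_unity[2] - qr_pos[2]
    # 2) Undo the QR yaw about Unity's +y axis (inverse of Unity's y rotation).
    th = math.radians(qr_yaw_deg)
    x_local = math.cos(th) * dx - math.sin(th) * dz
    z_local = math.sin(th) * dx + math.cos(th) * dz
    # 3) Unity (right, up, forward) -> ROS (forward, left, up): x = z, y = -x.
    return (z_local, -x_local)
```

Storing HRP coordinates relative to the QR origin, as the page describes, means only this final conversion depends on where the headset session started.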

Path coordinates are stored in a JSON file (relative to the QR origin), loaded at startup, and overwritten whenever a path is added or cleared. During drawing, a new waypoint is added when the cursor moves farther than a threshold \(D_{\mathrm{th}}\) from the previous waypoint (\(D_{\mathrm{th}} = 0.2\,\mathrm{m}\) in the user study).
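The distance-threshold rule can be sketched as a simple filter over cursor samples. The function name `sample_waypoints` is hypothetical. The sketch keeps a sample only when it lies strictly farther than \(D_{\mathrm{th}}\) from the last kept waypoint. Whether MRReP also appends the final cursor position on pinch release is not stated, so this sketch does not.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def sample_waypoints(cursor_samples: List[Point], d_th: float = 0.2) -> List[Point]:
    """Keep a cursor sample only if it is farther than d_th from the last waypoint."""
    waypoints: List[Point] = []
    for p in cursor_samples:
        # The first sample always starts the path.
        if not waypoints or math.dist(p, waypoints[-1]) > d_th:
            waypoints.append(p)
    return waypoints
```

For example, cursor samples at x = 0, 0.1, 0.25, 0.3, and 0.5 m along a line reduce to waypoints at 0, 0.25, and 0.5 m. The threshold bounds waypoint density regardless of how fast the hand moves or how often the cursor updates.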

On the ROS 2 side, a custom node converts the received HRP into a Navigation2-compatible path, and a custom global planner supplies that path to the stack. The final point is published as the goal pose, and a Regulated Pure Pursuit controller computes velocity commands by tracking a lookahead point on the local path.
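The conversion and tracking steps can be sketched without ROS dependencies. This is not the MRReP planner or Nav2's controller. It is a minimal illustration with hypothetical function names that (1) gives each waypoint a heading along the path, since path messages carry full poses, (2) takes the last pose as the goal, and (3) computes the classic pure pursuit curvature \(\kappa = 2y/L^2\) toward a lookahead waypoint.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Pose2D = Tuple[float, float, float]  # (x, y, yaw) in the map frame


def hrp_to_poses(points: List[Point]) -> List[Pose2D]:
    """Give every HRP waypoint a heading that points along the path."""
    if len(points) < 2:
        raise ValueError("an HRP needs at least two waypoints")
    poses: List[Pose2D] = []
    for i, (x, y) in enumerate(points):
        # Use the segment starting at i; the last point reuses the final segment.
        j = min(i, len(points) - 2)
        (x0, y0), (x1, y1) = points[j], points[j + 1]
        poses.append((x, y, math.atan2(y1 - y0, x1 - x0)))
    return poses


def goal_pose(poses: List[Pose2D]) -> Pose2D:
    """The final point of the path doubles as the navigation goal."""
    return poses[-1]


def pure_pursuit_curvature(robot: Pose2D, path: List[Point], lookahead: float) -> float:
    """Plain (unregulated) pure pursuit: curvature of the arc to a lookahead waypoint."""
    rx, ry, ryaw = robot
    # Search forward from the waypoint nearest to the robot.
    nearest = min(range(len(path)), key=lambda k: math.dist(path[k], (rx, ry)))
    target = path[-1]
    for p in path[nearest:]:
        if math.dist(p, (rx, ry)) >= lookahead:
            target = p
            break
    # Express the target in the robot frame (x forward, y left).
    dx, dy = target[0] - rx, target[1] - ry
    x_r = math.cos(ryaw) * dx + math.sin(ryaw) * dy
    y_r = -math.sin(ryaw) * dx + math.cos(ryaw) * dy
    dist2 = x_r * x_r + y_r * y_r
    # kappa = 2 * y / L^2; the angular velocity command would be v * kappa.
    return 0.0 if dist2 == 0.0 else 2.0 * y_r / dist2
```

Nav2's Regulated Pure Pursuit controller adds more on top of this core: it interpolates the lookahead point along the path and scales the linear velocity down on sharp curvature and near obstacles. Those regulation terms are omitted here.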

Experiments

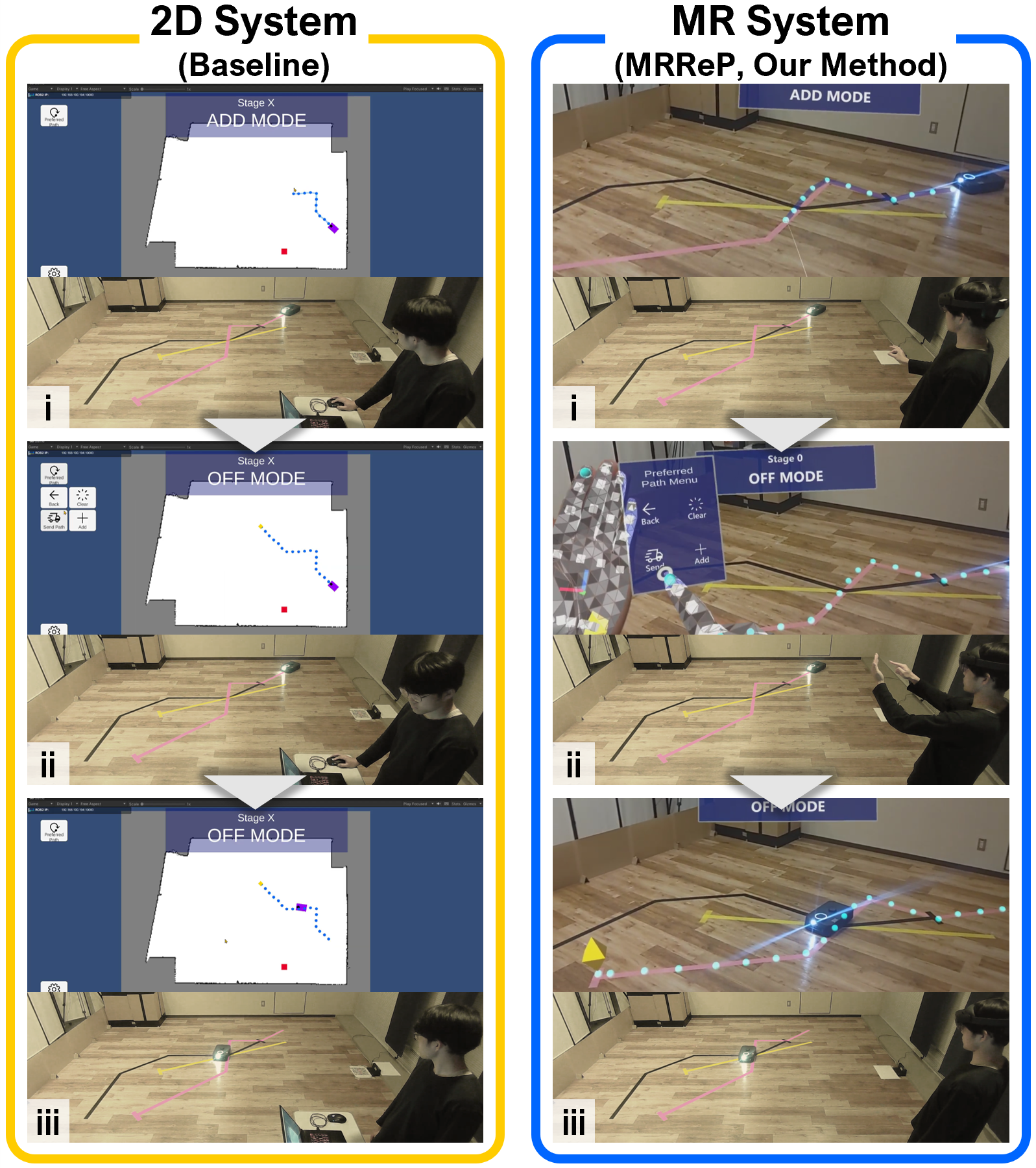

We conducted a within-subject study comparing MRReP (HoloLens 2, gesture drawing on the floor) with a 2D baseline (laptop, mouse on a 2D map). Both systems offered ADD, CLEAR, and SEND and shared the same navigation pipeline after submission.

Experimental environment: Stage A (straight), Stage B (piecewise linear with 45° turns).

Target paths: tape layout and corresponding paths on the ROS 2 costmap.

Task flow: path drawing, submission (Send), robot navigation.

We evaluated hand-drawn path accuracy (spatial deviation of the HRP from the target path), the number of drawing attempts, task completion time (from the start cue to path submission), path stability (global paths and robot trajectories), and usability and cognitive load (SUS and NASA-TLX). Sixteen participants (10 male, 6 female, aged 19–28) completed both conditions, with the system order counterbalanced. Each session consisted of instruction, practice, the main task, and a post-session questionnaire. The full procedure took about 80 minutes per participant, including consent, two 30-minute sessions, breaks, and a final comparative questionnaire. The environment contained a practice stage plus Stages A and B, with target paths marked by tape on the floor (practice: pink; Stage A: yellow, straight; Stage B: black, piecewise linear with multiple 45° turns).

Path accuracy and coverage

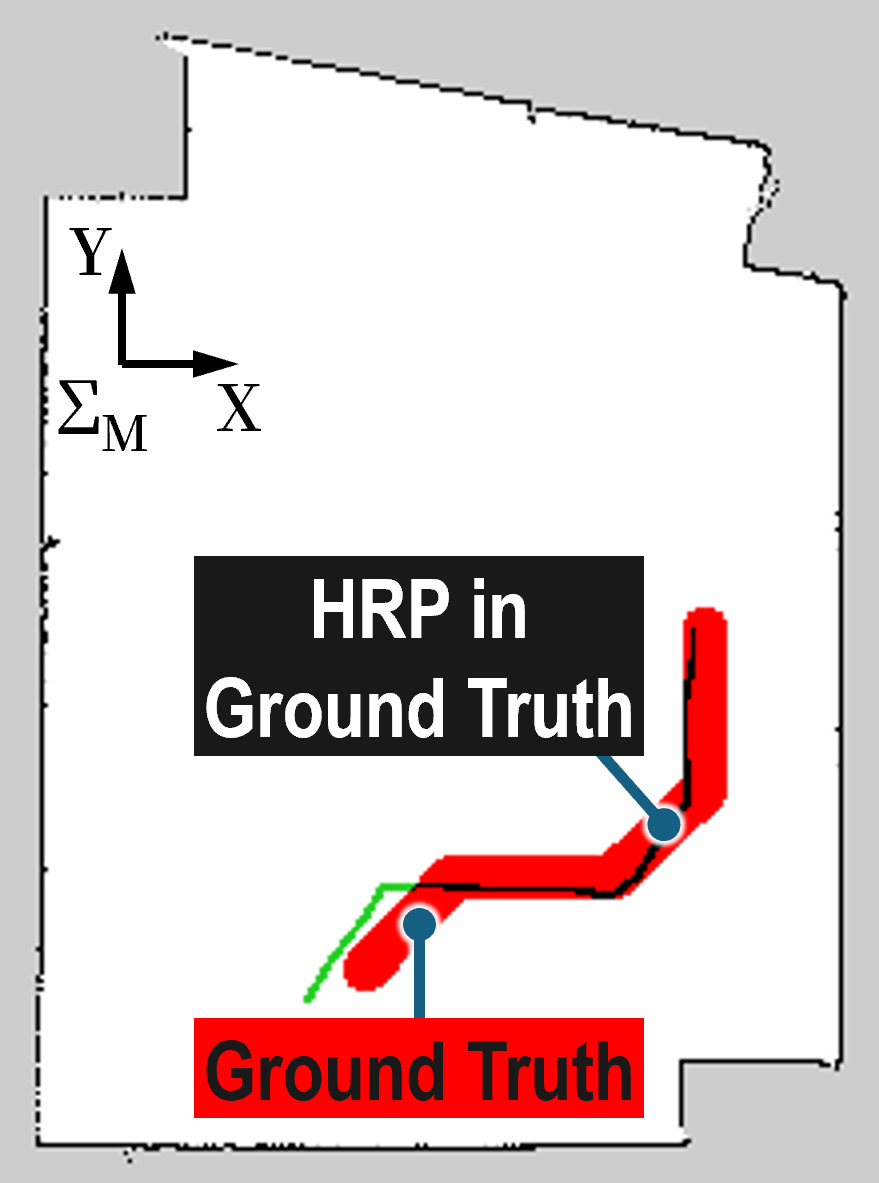

HRP and ground-truth (GT) overlay

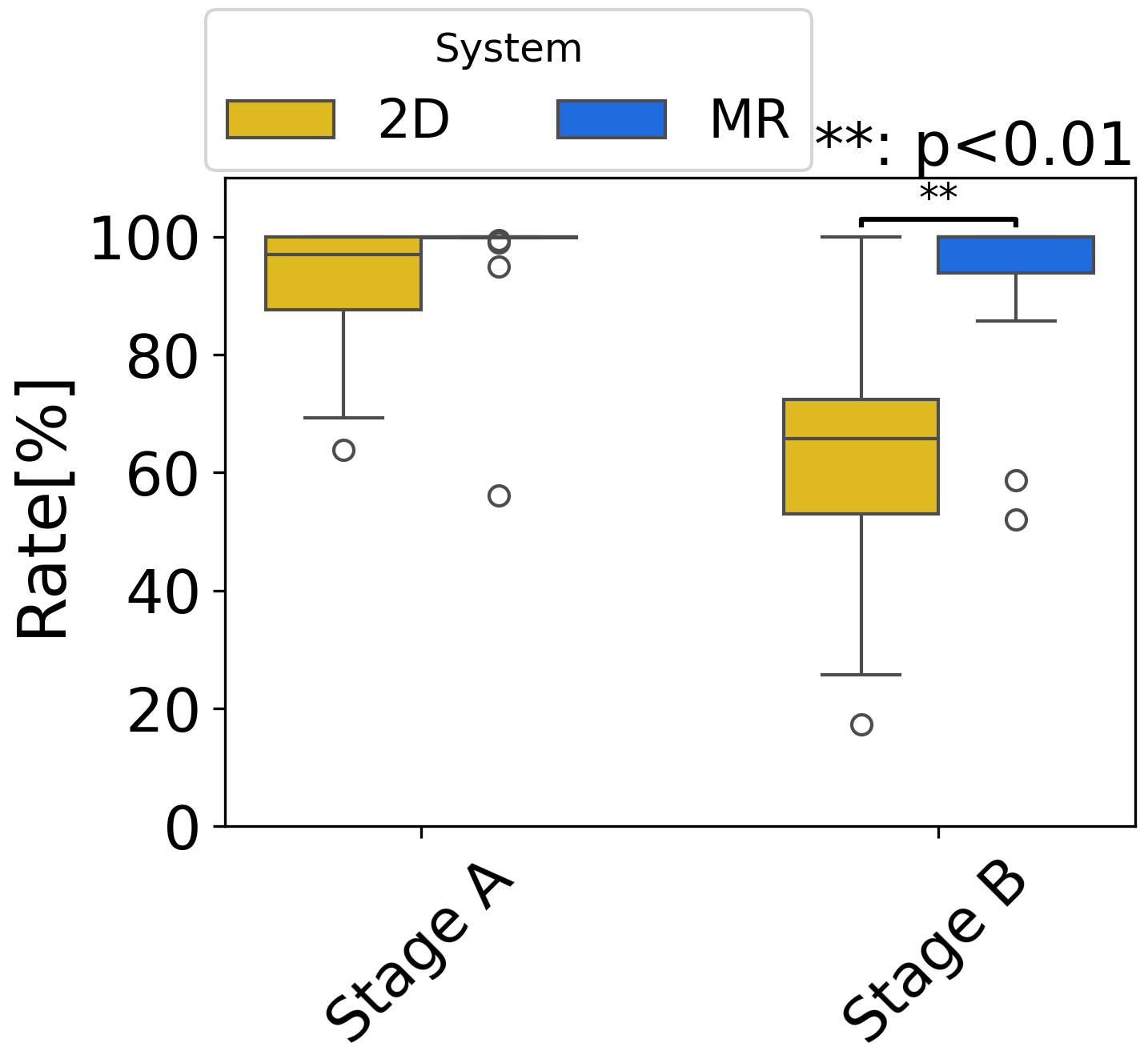

Percentage of HRP within GT

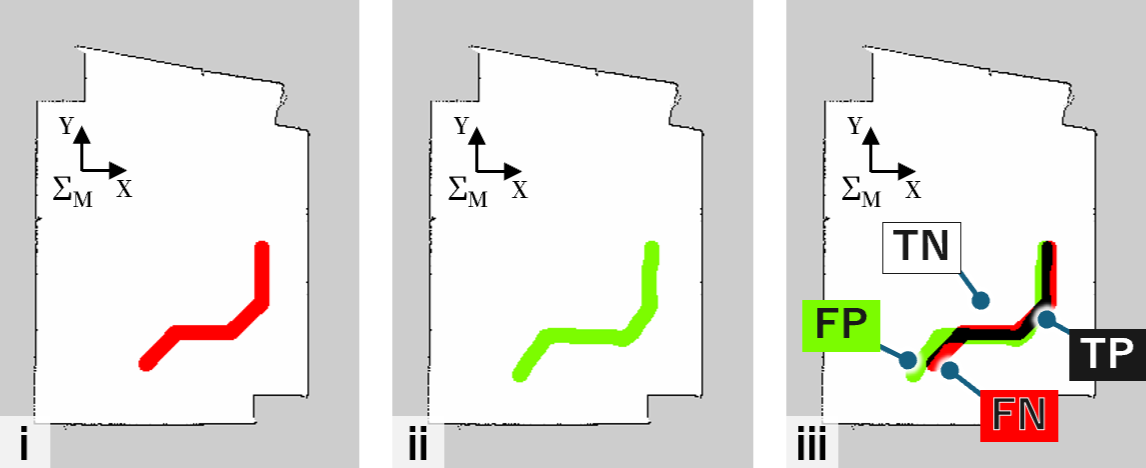

Pixel-wise comparison: TP / FP / FN / TN between GT and drawn region.
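The pixel-wise comparison reduces to counting agreement between two binary masks of the same size, one for the GT tape region and one for the rasterized drawn region. A minimal sketch, assuming both masks are already aligned in the same image frame (the function names are hypothetical): precision is the share of drawn pixels inside the GT, and recall is the share of GT pixels covered by the drawing.

```python
from typing import Dict, List, Tuple

Mask = List[List[bool]]


def confusion_counts(gt: Mask, drawn: Mask) -> Dict[str, int]:
    """Count TP / FP / FN / TN pixels between a GT mask and a drawn mask."""
    if len(gt) != len(drawn) or any(len(a) != len(b) for a, b in zip(gt, drawn)):
        raise ValueError("masks must have the same shape")
    counts = {"TP": 0, "FP": 0, "FN": 0, "TN": 0}
    for gt_row, dr_row in zip(gt, drawn):
        for g, d in zip(gt_row, dr_row):
            if g and d:
                counts["TP"] += 1  # drawn and inside GT
            elif d:
                counts["FP"] += 1  # drawn outside GT
            elif g:
                counts["FN"] += 1  # GT not covered by the drawing
            else:
                counts["TN"] += 1
    return counts


def precision_recall(c: Dict[str, int]) -> Tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    drawn_total = c["TP"] + c["FP"]
    gt_total = c["TP"] + c["FN"]
    precision = c["TP"] / drawn_total if drawn_total else 0.0
    recall = c["TP"] / gt_total if gt_total else 0.0
    return precision, recall
```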

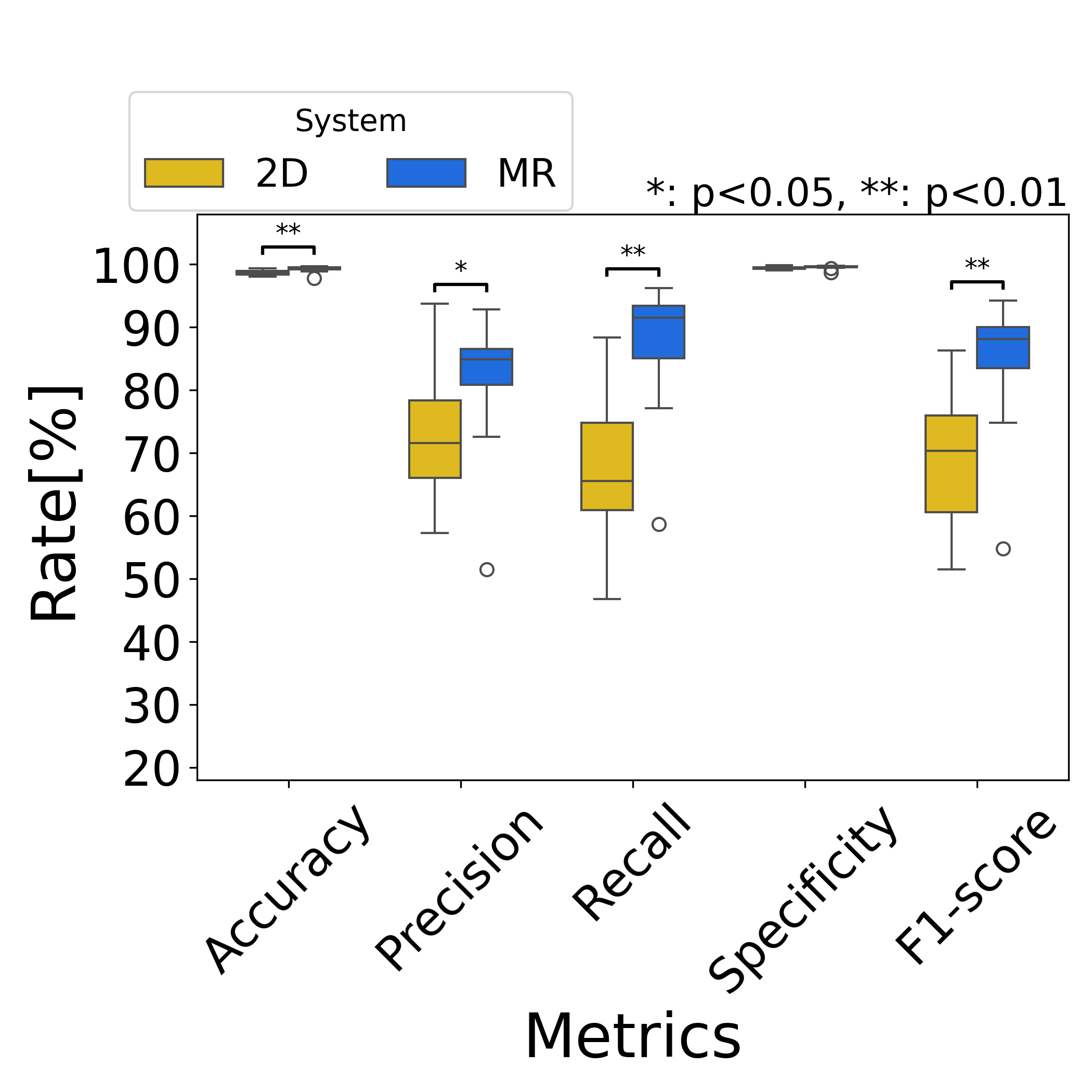

HRP accuracy metrics (Stage A)

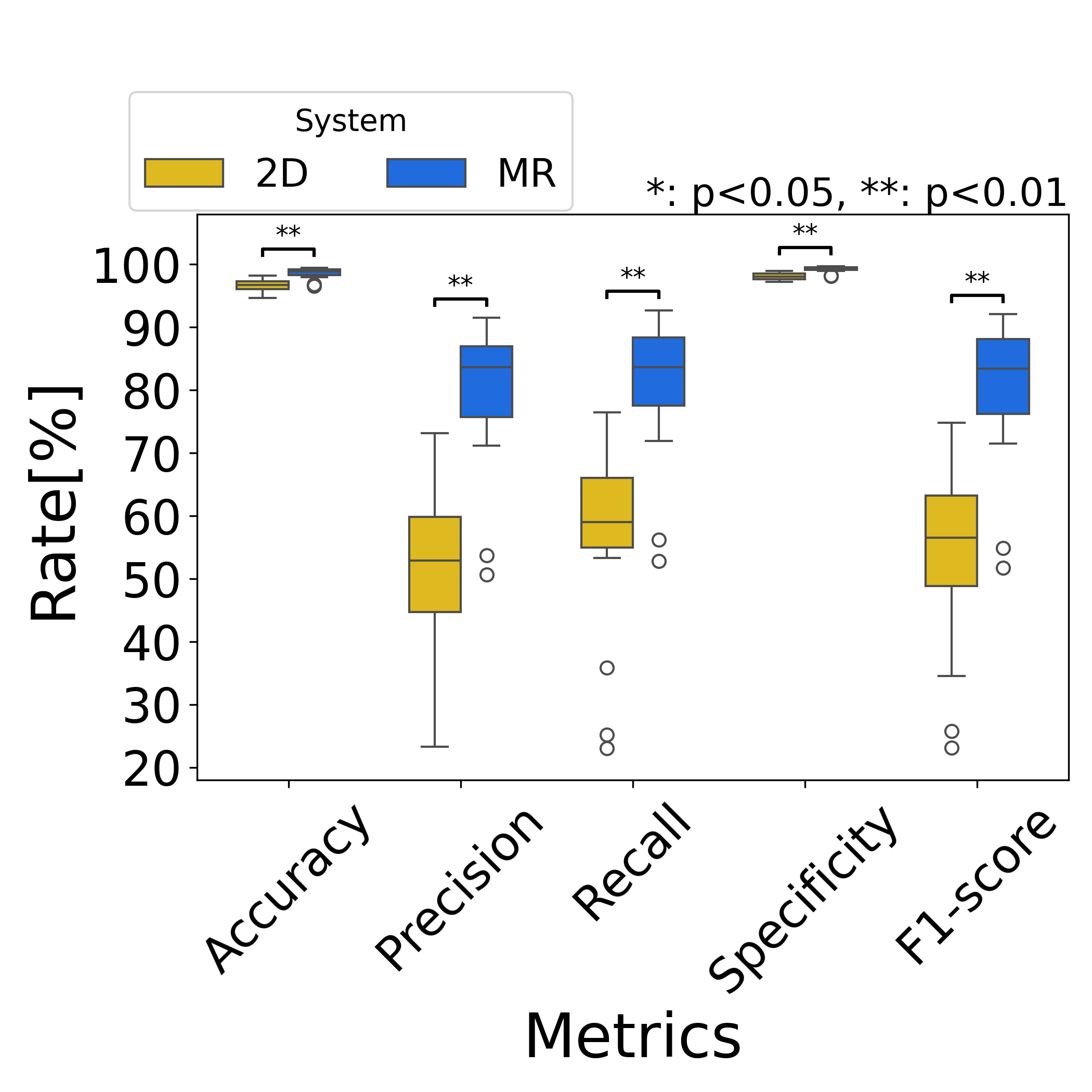

HRP accuracy metrics (Stage B)

MR achieved higher precision and recall than the 2D baseline (e.g., in Stage A, precision 84.9% vs. 71.6% and recall 91.6% vs. 65.5%), with particularly large gains in the more complex Stage B. Statistical comparisons used Wilcoxon signed-rank tests.
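For paired per-participant scores (MR vs. 2D), the Wilcoxon signed-rank statistic can be computed as follows. This is an illustrative sketch, not the authors' analysis script. It drops zero differences, assigns average ranks to ties, and reports \(T = \min(W^+, W^-)\) with a two-sided p-value from the normal approximation (no tie or continuity correction). Statistics packages may instead use an exact distribution for small samples, so their p-values can differ.

```python
import math
from typing import List, Tuple


def wilcoxon_signed_rank(a: List[float], b: List[float]) -> Tuple[float, float]:
    """Return (T, approximate two-sided p) for paired samples a and b."""
    if len(a) != len(b):
        raise ValueError("paired samples must have equal length")
    diffs = [x - y for x, y in zip(a, b) if x != y]  # drop zero differences
    n = len(diffs)
    if n == 0:
        raise ValueError("all differences are zero")
    # Rank |d| from smallest to largest, averaging ranks over ties.
    order = sorted(range(n), key=lambda k: abs(diffs[k]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    t = min(w_plus, w_minus)
    # Normal approximation of T under the null hypothesis.
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (t - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return t, p
```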

Drawing attempts and task time

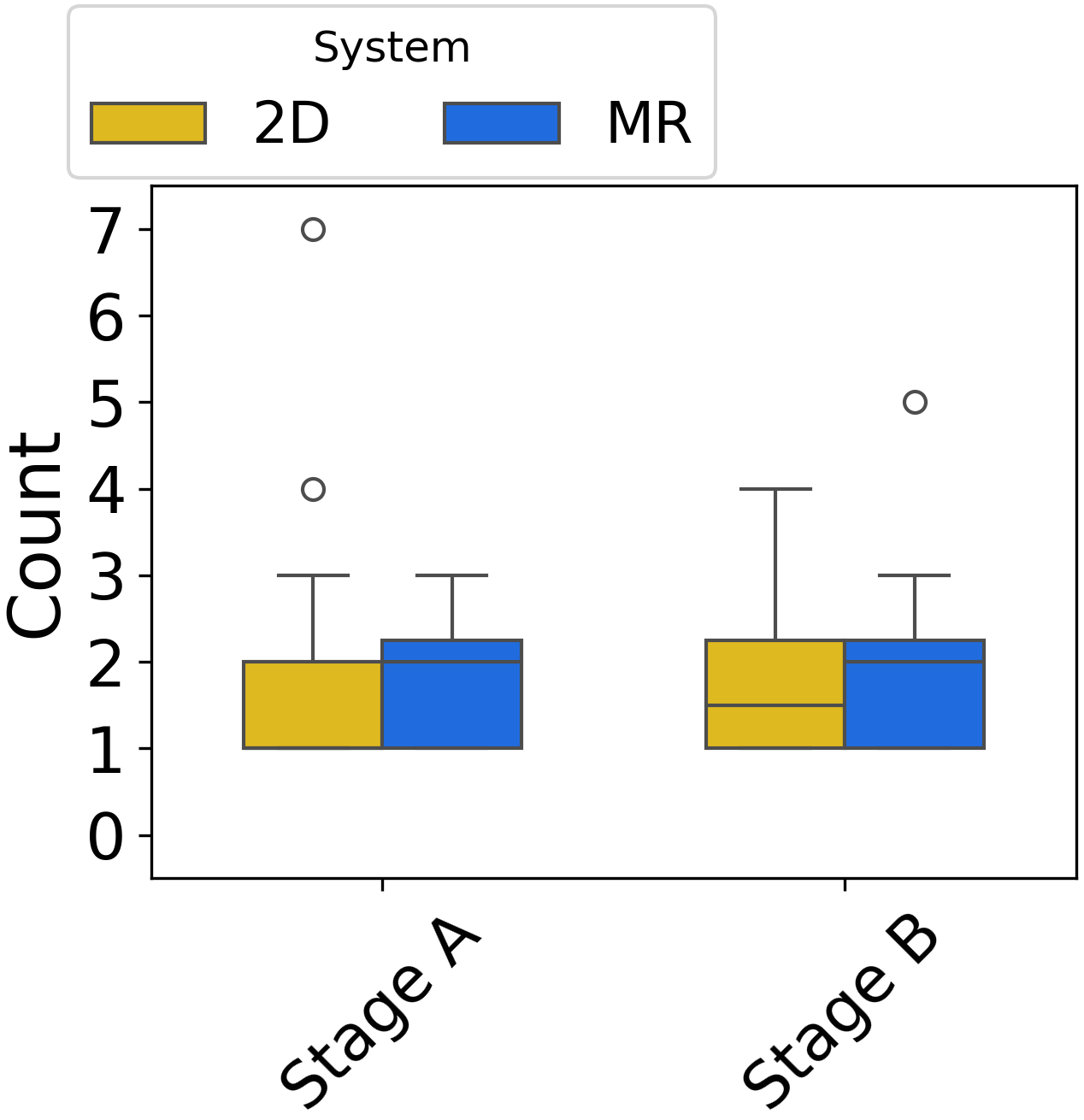

Number of drawing operations

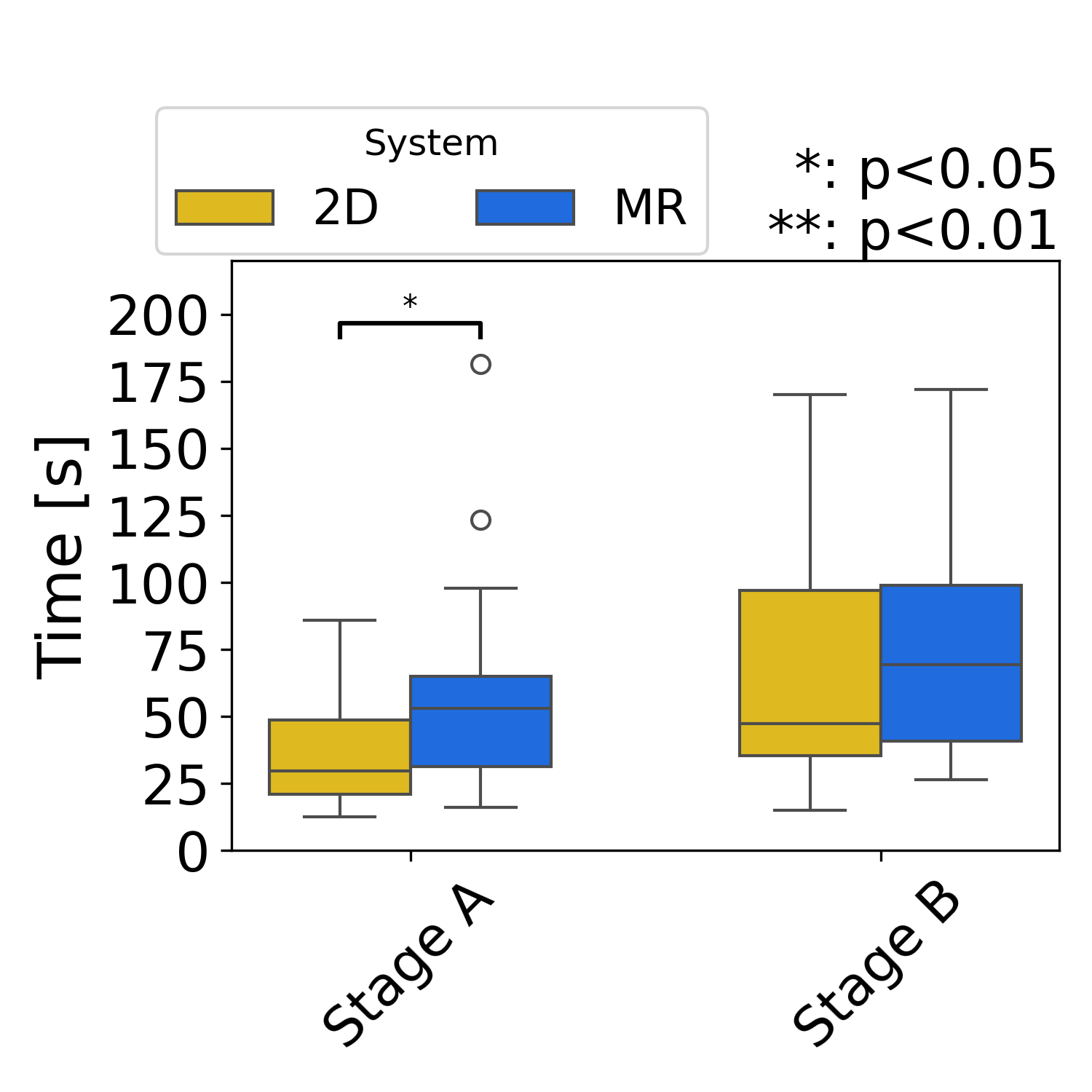

Task completion time (start to Send)

The number of drawing attempts did not differ significantly between conditions. Task completion time tended to be longer with MR, possibly because drawing in MR involves finer adjustments and larger physical movements than mouse input.

Path stability

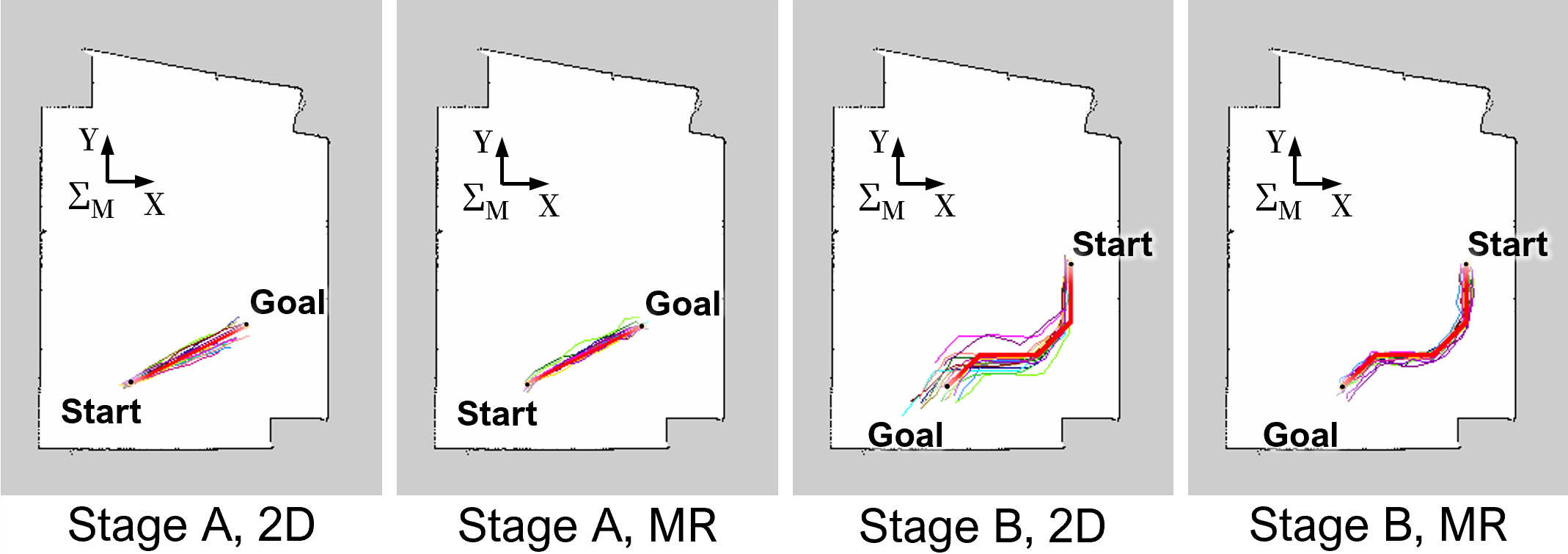

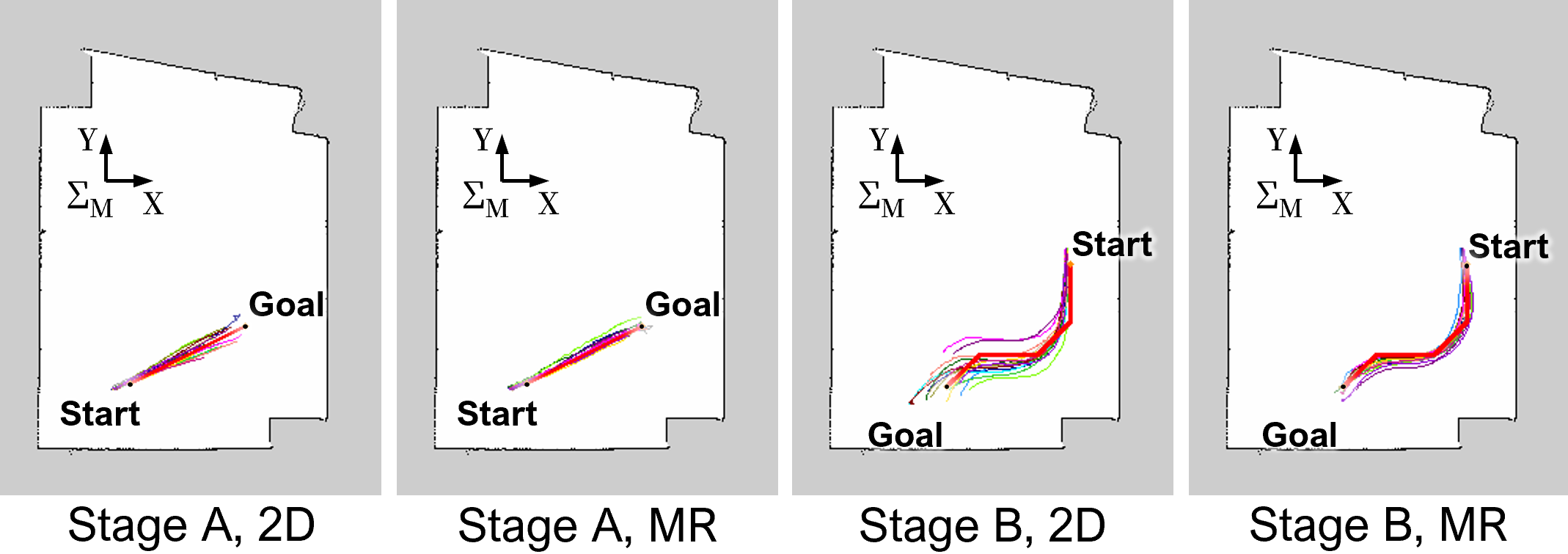

Global paths by condition and stage

Robot trajectories

In the 2D condition, paths often deviated increasingly from the GT with distance from the start, whereas MR showed smaller deviations and lower inter-participant variance, including in Stage B.
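One way to quantify deviation that grows with distance from the start is to pair each path point's arc length with its distance to the GT polyline. The page does not define its exact stability metric, so this is a hypothetical measure with hypothetical function names, shown only to make "deviation versus distance" concrete.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def point_segment_distance(p: Point, a: Point, b: Point) -> float:
    """Euclidean distance from p to the segment ab."""
    vx, vy = b[0] - a[0], b[1] - a[1]
    seg_len2 = vx * vx + vy * vy
    if seg_len2 == 0.0:
        return math.dist(p, a)
    # Project p onto the segment and clamp the projection to its endpoints.
    t = ((p[0] - a[0]) * vx + (p[1] - a[1]) * vy) / seg_len2
    t = max(0.0, min(1.0, t))
    return math.dist(p, (a[0] + t * vx, a[1] + t * vy))


def deviation_profile(path: List[Point], gt: List[Point]) -> List[Tuple[float, float]]:
    """For each path point: (arc length from the start, distance to the GT polyline)."""
    if len(gt) < 2:
        raise ValueError("GT polyline needs at least two points")
    profile: List[Tuple[float, float]] = []
    s = 0.0
    for i, p in enumerate(path):
        if i > 0:
            s += math.dist(path[i - 1], p)
        dev = min(point_segment_distance(p, gt[k], gt[k + 1]) for k in range(len(gt) - 1))
        profile.append((s, dev))
    return profile
```

Plotting the second element against the first for each participant would show whether deviation grows along the path, and the spread across participants at a given arc length reflects inter-participant variance.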

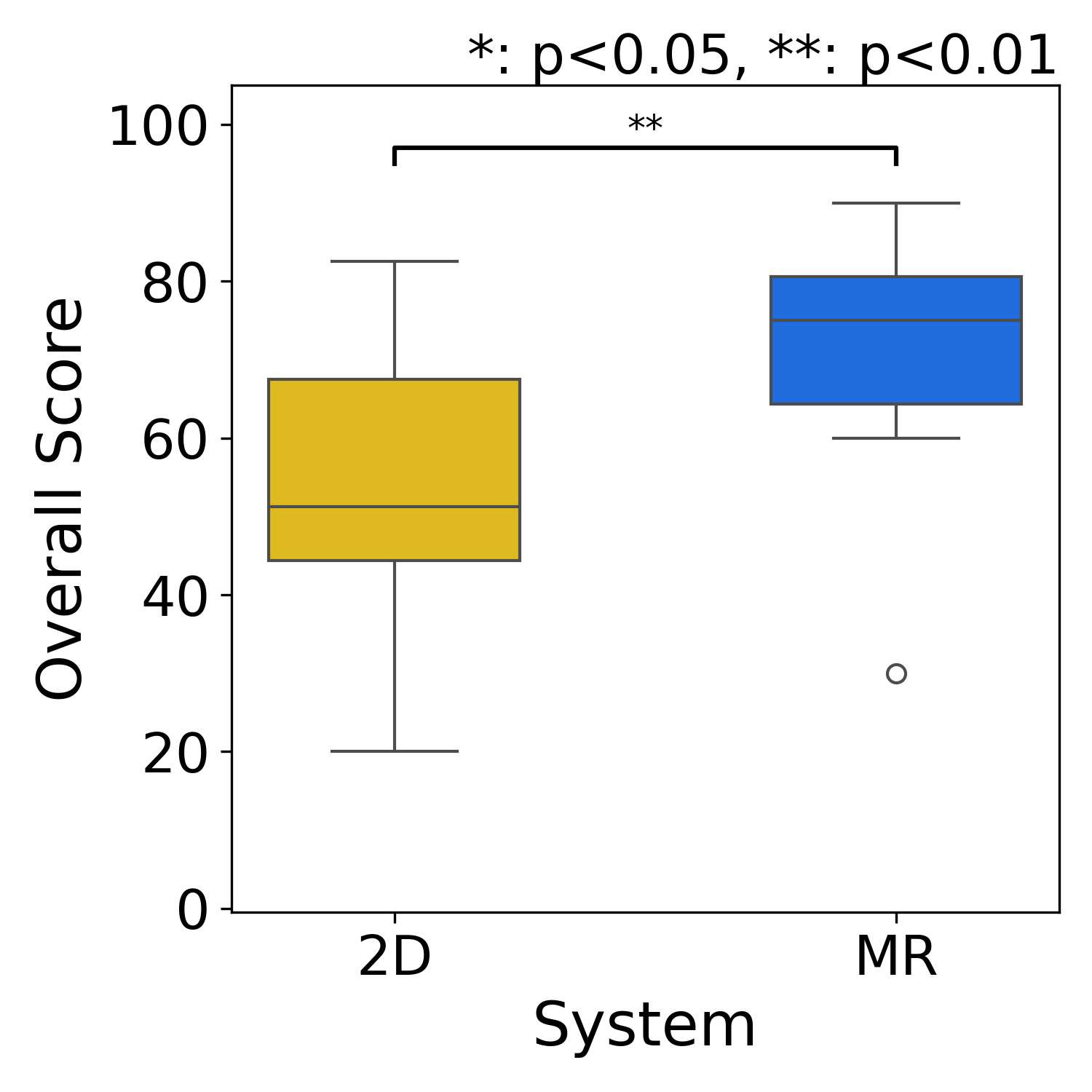

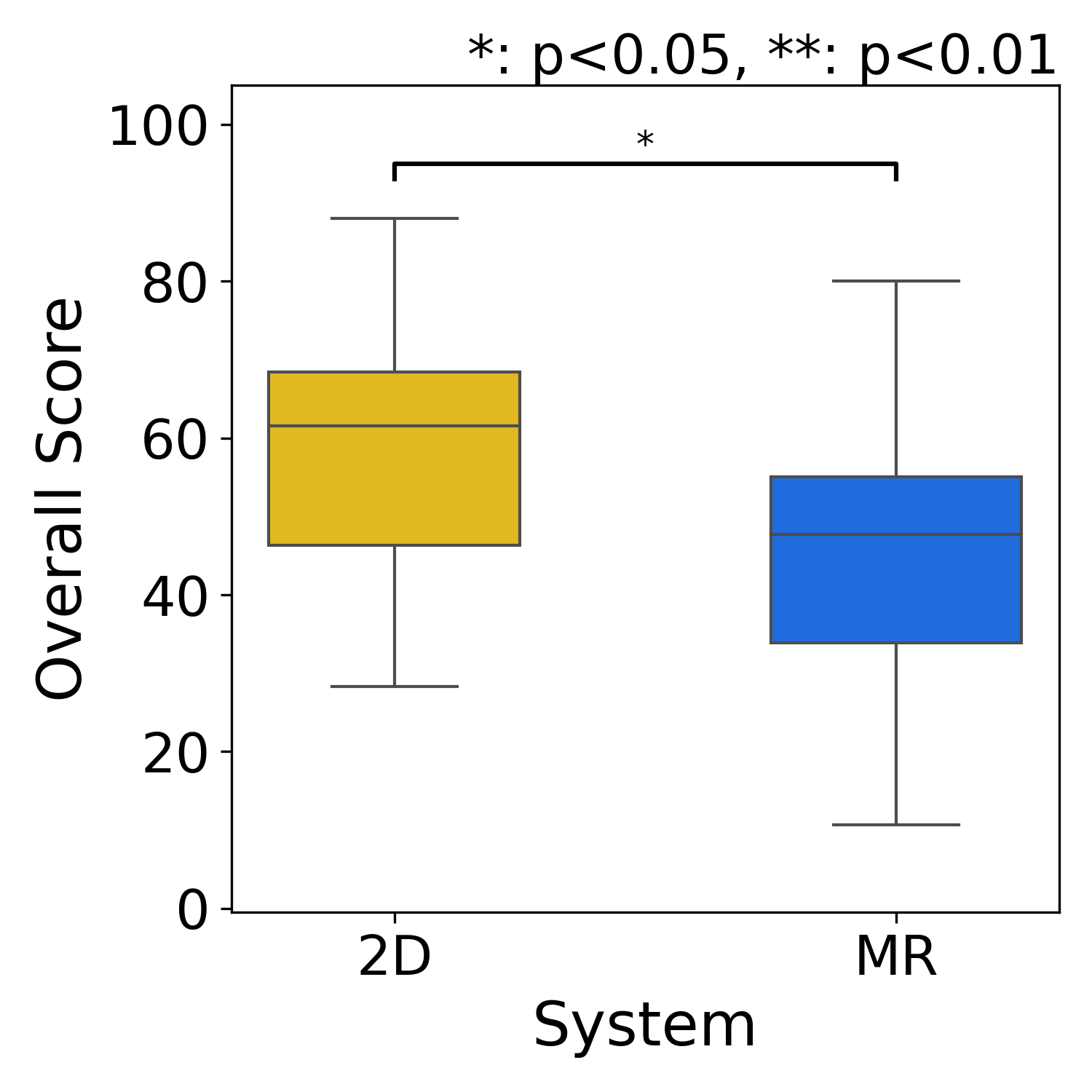

Subjective evaluation

SUS

NASA-TLX

MR yielded higher SUS scores and lower NASA-TLX scores than the baseline. Participants also reported MR-specific issues such as arm fatigue and gesture misrecognition.
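For reference, the standard SUS scoring rule is shown below: ten items on a 1 to 5 scale, odd items contribute (response − 1), even items contribute (5 − response), and the sum is multiplied by 2.5 to give a 0 to 100 score. The page does not say whether NASA-TLX was weighted or raw, so `raw_tlx` assumes the unweighted (Raw TLX) variant, i.e. the mean of the six 0 to 100 subscale ratings. Both function names are illustrative.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Standard SUS score (0-100) from ten 1-5 Likert responses."""
    if len(responses) != 10 or any(not 1 <= r <= 5 for r in responses):
        raise ValueError("SUS needs ten responses on a 1-5 scale")
    total = 0
    for item, r in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (r - 1) if item % 2 == 1 else (5 - r)
    return total * 2.5


def raw_tlx(ratings: Sequence[float]) -> float:
    """Assumed Raw TLX: unweighted mean of the six subscale ratings (0-100)."""
    if len(ratings) != 6:
        raise ValueError("NASA-TLX has six subscales")
    return sum(ratings) / 6
```

A neutral answer of 3 on every item yields a SUS score of 50.0, while the ideal pattern (5 on odd items, 1 on even items) yields 100.0.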

These results support direct path drawing in MR as a way to encode human spatial intention for mobile robot navigation, with improved fidelity and usability despite longer interaction time in some cases.

Citation

@misc{taki2026mrrepmixedrealitybasedhanddrawn,
  title={MRReP: Mixed Reality-based Hand-drawn Reference Path Editing Interface for Mobile Robot Navigation},
  author={Takumi Taki and Masato Kobayashi and Yuki Uranishi},
  year={2026},
  eprint={2604.00059},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2604.00059},
}

Contact

Corresponding author

- Masato Kobayashi (Assistant Professor, The University of Osaka, Japan)

X (Twitter)

- English: https://twitter.com/MeRTcookingEN

- Japanese: https://twitter.com/MeRTcooking

LinkedIn: https://www.linkedin.com/in/kobayashi-masato-robot/