SpeeLo: Speech Interaction During Locomotion in Resource-Constrained Bipedal Robot

The University of Osaka / Kobe University

A central design challenge for bipedal robots is keeping real-time locomotion control stable while also running computationally heavy speech processing. This work presents SpeeLo (Speech Interaction During Locomotion in Resource-Constrained Bipedal Robot), a distributed architecture and evaluation methodology for integrating spoken interaction into bipedal robots with limited onboard compute. A lightweight server on the robot handles locomotion control and audio playback, while speech recognition and other heavy processing are offloaded to an external client. Multi-turn dialogue history enables context-aware response generation. Experiments on physical hardware show that the proposed system has minimal impact on the locomotion control cycle and that conversational context improves response quality, supporting safe, practical speech integration under resource constraints.

Concept of SpeeLo: a bipedal robot engaged in speech interaction while walking.

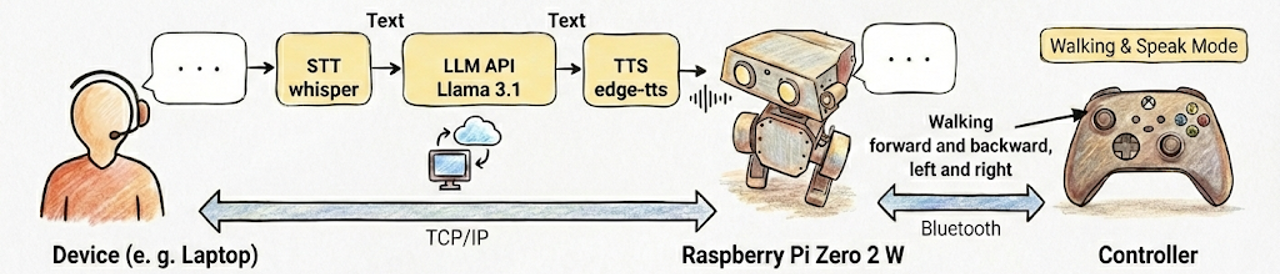

System architecture

We extend the open-source Open Duck Mini locomotion stack without altering its walking behavior, using a speech server on the robot and a speech client on an external device. The locomotion module launches the speech server in a background thread within the same process so the main control loop does not contend with speech-related compute on a single thread.
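The threading arrangement described above can be sketched roughly as follows. This is a minimal illustration, not the project's code: `speech_server`, `control_loop`, and `run` are hypothetical names, the server body is a placeholder for blocking network I/O, and the policy step is left empty.

```python
import threading
import time

CONTROL_PERIOD_S = 0.02  # 50 Hz target stated in the text


def speech_server(stop_event):
    """Hypothetical stand-in for the speech server thread."""
    while not stop_event.is_set():
        # Placeholder for waiting on a socket; a real server would
        # receive audio here and hand it to the playback routine.
        stop_event.wait(0.005)


def control_loop(n_cycles):
    """Fixed-rate loop; returns the measured work time of each cycle (s)."""
    durations = []
    next_deadline = time.perf_counter()
    for _ in range(n_cycles):
        start = time.perf_counter()
        # Policy inference and servo commands would run here.
        durations.append(time.perf_counter() - start)
        next_deadline += CONTROL_PERIOD_S
        sleep_for = next_deadline - time.perf_counter()
        if sleep_for > 0:
            time.sleep(sleep_for)
    return durations


def run(n_cycles=10):
    """Start the server in a background thread, then run the control loop."""
    stop_event = threading.Event()
    server = threading.Thread(target=speech_server, args=(stop_event,), daemon=True)
    server.start()
    try:
        durations = control_loop(n_cycles)
    finally:
        stop_event.set()
        server.join(timeout=1.0)
    return durations, server.is_alive()
```

Because the server thread spends most of its time blocked on I/O, it leaves the control loop free to meet its deadline; logging the per-cycle durations, as `control_loop` does, is one way to check that this holds in practice.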

- On-robot: Locomotion control at 50 Hz, playback of audio received over the network, and exception handling for timeouts and disconnections.

- External client: Whisper (base model) for speech recognition, the Groq API (llama-3.1-8b-instant) for response generation, and edge-tts for Japanese speech synthesis. The client keeps a bounded multi-turn history (up to 10 user–assistant pairs) and sends it, preceded by a system prompt, with each inference call.
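The bounded dialogue history on the client could be kept along these lines. This is a sketch under assumptions: the `DialogueHistory` class, the system prompt text, and the role/content message layout (the common chat-completion convention) are illustrative and not taken from the SpeeLo code; only the limit of 10 pairs comes from the description above.

```python
from collections import deque

MAX_PAIRS = 10  # limit stated in the text
SYSTEM_PROMPT = "You are a small bipedal robot."  # hypothetical prompt


class DialogueHistory:
    """Keeps the most recent user-assistant pairs, dropping the oldest first."""

    def __init__(self, max_pairs=MAX_PAIRS):
        self._pairs = deque(maxlen=max_pairs)

    def add_turn(self, user_text, assistant_text):
        self._pairs.append((user_text, assistant_text))

    def build_messages(self, new_user_text):
        """Return system prompt + stored history + the new user utterance."""
        messages = [{"role": "system", "content": SYSTEM_PROMPT}]
        for user_text, assistant_text in self._pairs:
            messages.append({"role": "user", "content": user_text})
            messages.append({"role": "assistant", "content": assistant_text})
        messages.append({"role": "user", "content": new_user_text})
        return messages
```

A `deque` with `maxlen` discards the oldest pair automatically, so the prompt size stays bounded no matter how long the conversation runs.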

System overview: locomotion control and audio playback run on the robot; recognition, generation, and synthesis run on the external client. Dialogue history is kept on the client.

Platform and hardware

Experiments use the Open Duck Mini v2 platform. The onboard computer is a Raspberry Pi Zero 2 W running the closed-loop policy at 50 Hz. Sensing comprises a BNO055 IMU and digital foot-contact inputs; actuation is 14 DoF (legs, neck, and head) driven by Feetech servos.

Experimental results (summary)

Locomotion control cycle (target ≤ 20 ms at 50 Hz)

Without speech interaction: mean 8.677 ms (std 0.221 ms). With speech interaction: mean 8.824 ms (std 0.734 ms). The mean increases by 0.147 ms (+1.69%), and both means stay well under the 20 ms budget, although cycle-time variability rises (std 0.221 ms to 0.734 ms). This indicates limited impact on the real-time loop under the distributed design.
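The overhead figures can be reproduced from the reported means with a few lines of arithmetic. The function name `cycle_overhead` is illustrative; the inputs are the values quoted above.

```python
BUDGET_MS = 20.0  # one control period at 50 Hz


def cycle_overhead(mean_without_ms, mean_with_ms, budget_ms=BUDGET_MS):
    """Return absolute increase (ms), relative increase (%), and budget use (%)."""
    diff_ms = mean_with_ms - mean_without_ms
    relative_pct = diff_ms / mean_without_ms * 100.0
    budget_use_pct = mean_with_ms / budget_ms * 100.0
    return diff_ms, relative_pct, budget_use_pct


diff_ms, relative_pct, budget_use_pct = cycle_overhead(8.677, 8.824)
print(f"increase: {diff_ms:.3f} ms ({relative_pct:.2f} %)")  # 0.147 ms (1.69 %)
print(f"share of 20 ms budget used: {budget_use_pct:.1f} %")  # 44.1 %
```

On these means, the loop with speech enabled uses a little under half of the 20 ms period.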

Dialogue latency

Mean stage times: speech recognition 0.87 s (std 0.60 s), response generation 0.82 s (std 0.05 s), speech synthesis 1.19 s (std 0.29 s). End-to-end latency, from recognition through audio transmission, averages 2.91 s (std 0.71 s); all of this heavy processing runs on the client.
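As a quick consistency check on these numbers, the three stage means can be summed and compared with the end-to-end mean. The sketch below assumes the stages run sequentially and that whatever remains is audio transmission and other overhead; that attribution is an interpretation, not something the results state.

```python
STAGE_MEANS_S = {
    "speech recognition": 0.87,
    "response generation": 0.82,
    "speech synthesis": 1.19,
}
END_TO_END_MEAN_S = 2.91


def latency_breakdown(stage_means, end_to_end):
    """Return the summed stage time, the unexplained residual, and the slowest stage."""
    stage_total = sum(stage_means.values())
    residual = end_to_end - stage_total  # assumed: transmission and overhead
    slowest = max(stage_means, key=stage_means.get)
    return stage_total, residual, slowest


total, residual, slowest = latency_breakdown(STAGE_MEANS_S, END_TO_END_MEAN_S)
print(f"stages: {total:.2f} s, residual: {residual:.2f} s, slowest: {slowest}")
# stages: 2.88 s, residual: 0.03 s, slowest: speech synthesis
```

On these means, the three processing stages account for nearly all of the end-to-end latency, with speech synthesis the largest single contributor.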

Multi-turn context

With dialogue history, the system correctly grounds follow-up questions (e.g., weather asked after an earlier remark about rain) and stays consistent on attributes such as the robot’s name across turns.

Citation

@misc{kobayashi2026emolo,
  title={EmoLo: Emotion-Inspired Expressive Locomotion via Single-Policy Reinforcement Learning on Low-Cost Bipedal Robots},
  author={Masato Kobayashi},
  year={2026},
  url={https://mertcookimg.github.io/speelo/},
}

Contact

Corresponding author

- Masato Kobayashi (Assistant Professor, The University of Osaka, Japan)

X (Twitter)

- English: https://twitter.com/MeRTcookingEN

- Japanese: https://twitter.com/MeRTcooking

LinkedIn: https://www.linkedin.com/in/kobayashi-masato-robot/